Walk This Way: A Better Way to Identify Gait Differences

Osaka University researchers design a gait recognition method that can overcome intra-subject variations by view differences

Biometric-based person recognition methods have been extensively explored for various applications, such as access control, surveillance, and forensics. Biometric verification involves any means by which a person can be uniquely identified through biological traits such as facial features, fingerprints, hand geometry, and gait, which is a person’s manner of walking.

Gait is a practical trait for video-based surveillance and forensics because it can be captured at a distance on video. In fact, gait recognition has been already used in practical cases in criminal investigations. However, gait recognition is susceptible to intra-subject variations, such as view angle, clothing, walking speed, shoes, and carrying status. Such hindering factors have prompted many researchers to explore new approaches with regard to these variations.

Research harnessing the capabilities of deep learning frameworks to improve gait recognition methods has been geared to convolutional neural network (CNN) frameworks, which take into account computer vision, pattern recognition, and biometrics. A convolutional signal means combining any two of these signals to form a third that provides more information.

An advantage of a CNN-based approach is that network architectures can easily be designed for better performance by changing inputs, outputs, and loss functions. Nevertheless, a team of Osaka University-centered researchers noticed that existing CNN-based cross-view gait recognition fails to address two important aspects.

“Current CNN-based approaches are missing the aspects on verification versus identification, and the trade-off between spatial displacement, that is, when the subject moves from one location to another,” study lead author Noriko Takemura explains.

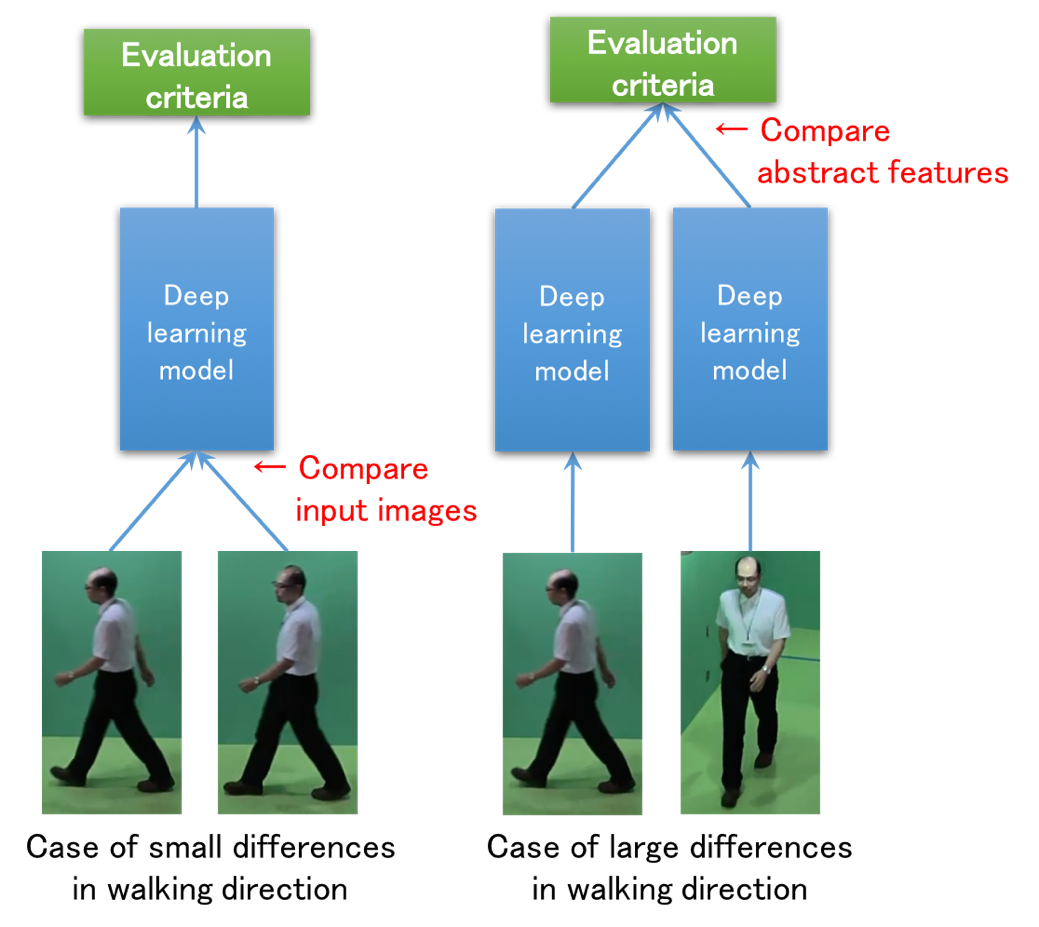

Considering these two aspects, the researchers designed input/output architectures for CNN-based cross-view gait recognition. They employed a Siamese network for verification, where an input is a pair of gait features for matching, and an output is genuine (the same subjects) or imposter (different subjects) probability.

Notably, the Siamese network architectures are insensitive to spatial displacement, as the difference between a matching pair is calculated at the last layer after passing through the convolution and max pooling layers, which reduces the gait image dimensionality and allows for assumptions to be made about hidden features. They can therefore be expected to have higher performance under considerable view differences. The researchers also used CNN architectures where the difference between a matching pair is calculated at the input level to make them more sensitive to spatial displacement.

“We conducted experiments for cross-view gait recognition and confirmed that the proposed architectures outperformed the state-of-the-art benchmarks in accordance with their suitable situations of verification/identification tasks and view differences,” coauthor Yasushi Makihara says.

As spatial displacement is caused not only by view difference but also walking speed difference, carrying status difference, clothing difference, and other factors, the researchers plan to further evaluate their proposed method for gait recognition with spatial displacement caused by other covariates.

Figure: Effective network architecture depending on walking direction (credit: Osaka University)

To learn more about this research, please view the full research report entitled " On Input/Output Architectures for Convolutional Neural Network-Based Cross-View Gait Recognition " at this page of IEEE Transactions on Circuits and Systems for Video Technology .

Related links